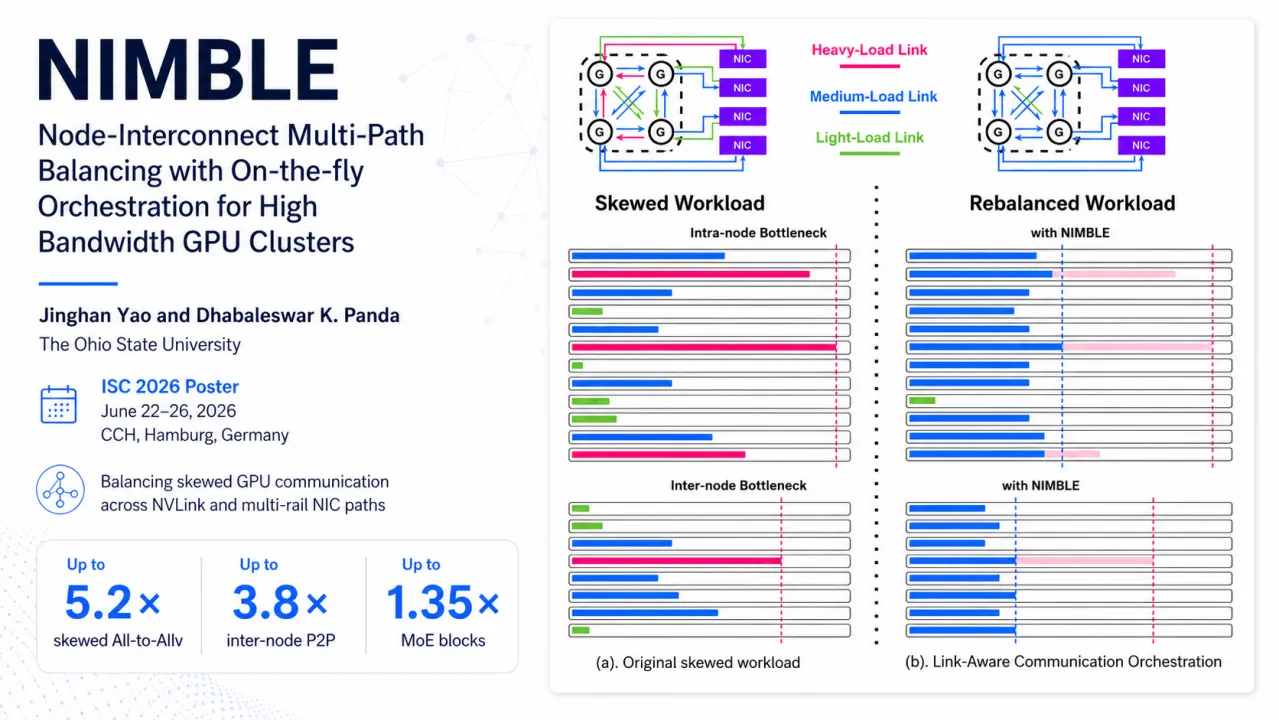

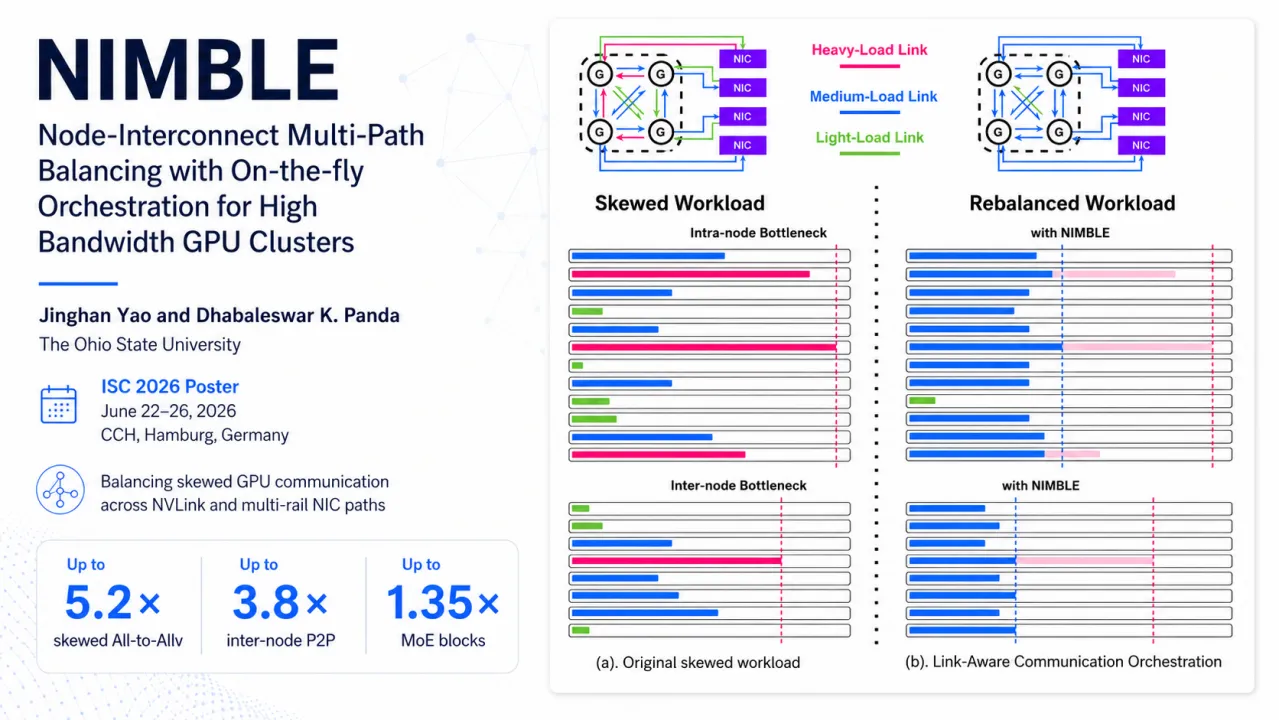

NIMBLE: Node-Interconnect Multi-Path Balancing with On-the-fly Orchestration for High Bandwidth GPU Clusters

Wednesday, June 24, 2026 3:45 PM to 5:15 PM · 1 hr. 30 min. (Europe/Berlin)

Foyer D-G - 2nd Floor

Research Poster

HW and SW Design for Scalable Machine LearningLarge Language Models and Generative AI in HPCNetworking and Interconnects

Information

Poster is on display and will be presented at the poster pitch session.

Modern GPU clusters offer massive communication bandwidth through high-speed connections like NVLink (within servers) and InfiniBand or RoCE (between servers). However, real-world applications often waste this capacity because traffic gets concentrated on just a few links while others remain unused. This imbalance creates bottlenecks, causes latency spikes, and prevents applications from scaling well, even though plenty of total bandwidth is available.

Current communication libraries like NCCL and MPI rely on fixed routing strategies that cannot adapt when traffic patterns change unexpectedly. When workloads have uneven communication demands—common in AI models like Mixture-of-Experts, graph analytics, or recommendation systems—some network links become congested while others sit idle, leading to chronic performance problems.

We introduce NIMBLE, a runtime system that dynamically redistributes network traffic to balance load across all available paths. NIMBLE monitors link utilization in real-time and uses a fast optimization algorithm to route data through less congested paths, including forwarding through intermediate GPUs and utilizing multiple network interfaces when beneficial. It integrates transparently with existing communication libraries without requiring application changes, while preserving message ordering and maintaining low overhead.

On clusters with NVIDIA H100 GPUs, NIMBLE achieves up to 2.3× higher bandwidth for within-node communication and 3.8× higher throughput between nodes compared to single-path approaches. For imbalanced workloads like sparse data exchanges in AI models, it outperforms standard libraries by up to 5.2×, while delivering 1.35× speedups on end-to-end large language model training tasks with Mixture-of-Experts architectures. Under balanced traffic conditions, NIMBLE matches the performance of existing approaches.

Contributors:

Modern GPU clusters offer massive communication bandwidth through high-speed connections like NVLink (within servers) and InfiniBand or RoCE (between servers). However, real-world applications often waste this capacity because traffic gets concentrated on just a few links while others remain unused. This imbalance creates bottlenecks, causes latency spikes, and prevents applications from scaling well, even though plenty of total bandwidth is available.

Current communication libraries like NCCL and MPI rely on fixed routing strategies that cannot adapt when traffic patterns change unexpectedly. When workloads have uneven communication demands—common in AI models like Mixture-of-Experts, graph analytics, or recommendation systems—some network links become congested while others sit idle, leading to chronic performance problems.

We introduce NIMBLE, a runtime system that dynamically redistributes network traffic to balance load across all available paths. NIMBLE monitors link utilization in real-time and uses a fast optimization algorithm to route data through less congested paths, including forwarding through intermediate GPUs and utilizing multiple network interfaces when beneficial. It integrates transparently with existing communication libraries without requiring application changes, while preserving message ordering and maintaining low overhead.

On clusters with NVIDIA H100 GPUs, NIMBLE achieves up to 2.3× higher bandwidth for within-node communication and 3.8× higher throughput between nodes compared to single-path approaches. For imbalanced workloads like sparse data exchanges in AI models, it outperforms standard libraries by up to 5.2×, while delivering 1.35× speedups on end-to-end large language model training tasks with Mixture-of-Experts architectures. Under balanced traffic conditions, NIMBLE matches the performance of existing approaches.

Contributors:

Format

on-demandon-site