Rethinking Compute Platforms: When Cloud Can Replace HPC, and When It Cannot

Wednesday, June 24, 2026 3:45 PM to 5:15 PM · 1 hr. 30 min. (Europe/Berlin)

Foyer D-G - 2nd Floor

Research Poster

Bioinformatics and Life SciencesChemistry and Materials ScienceEmerging Computing TechnologiesHPC in the Cloud and HPC ContainersPerformance Measurement

Information

Poster is on display and will be presented at the poster pitch session.

Traditional HPC (tHPC) infrastructure deployment requires weeks to months for hardware procurement, network configuration, and software integration, limiting agility for short-term projects and hampering reproducibility through non-standardized configurations. Infrastructure-as-Code (IaC) enables rapid, version-controlled cluster deployment, but systematic evaluation of production-grade open-source IaC frameworks for communication-intensive workloads remains limited. Prior virtualization research demonstrates 5-10% single-node overhead but significant multi-node scaling challenges due to network latency. This work benchmarks virtual HPC clusters deployed via Magic Castle within Germany's InHPC-DE project, evaluating open-source IaC solutions rather than proprietary offerings like AWS ParallelCluster or Azure CycleCloud.

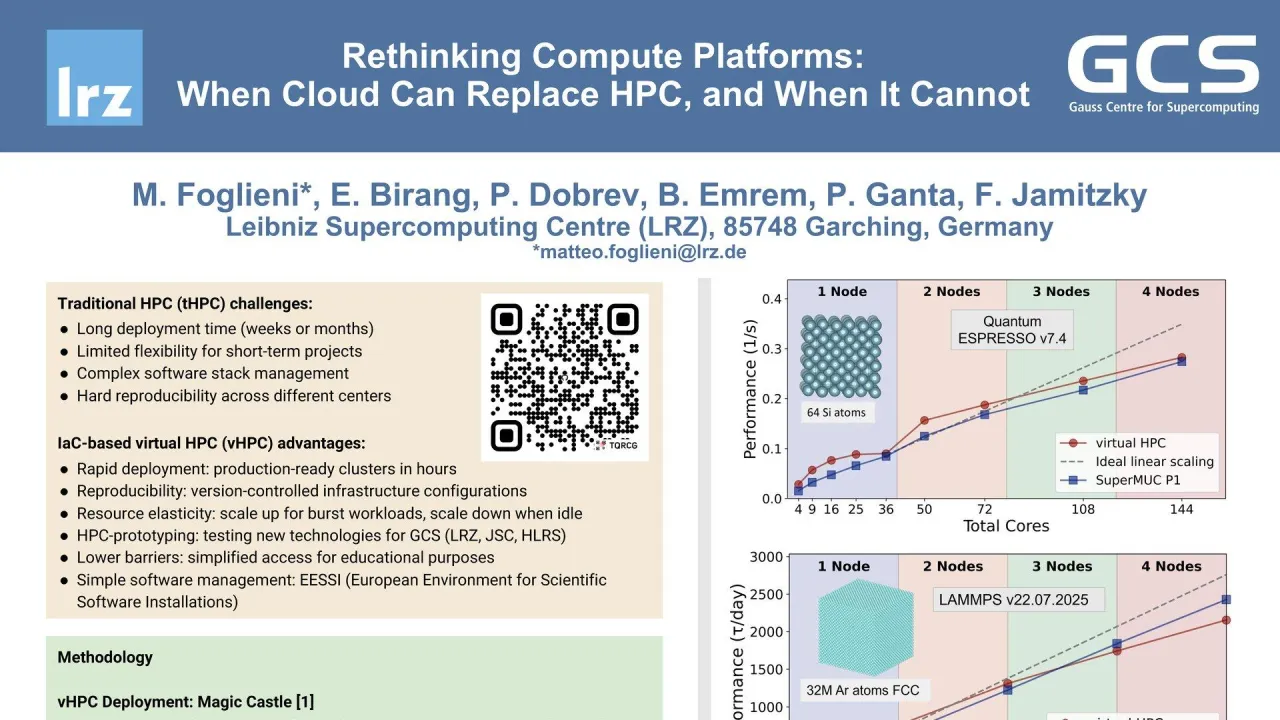

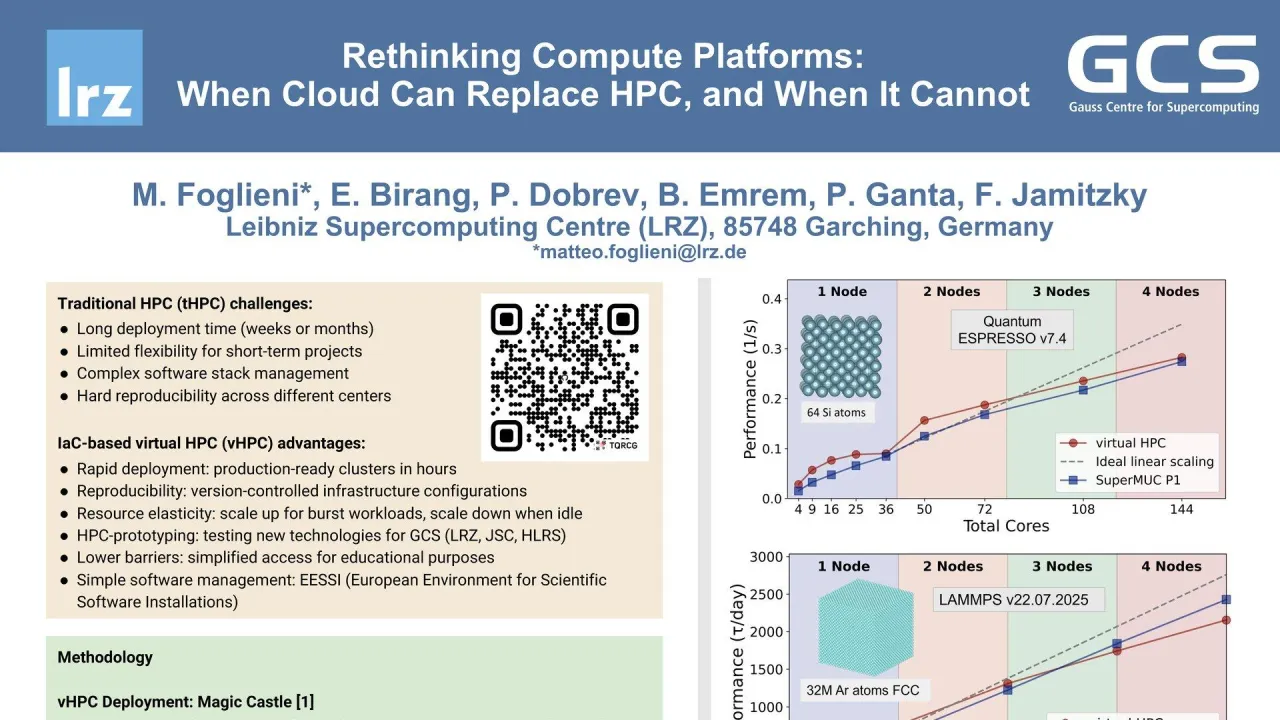

We deployed a virtual HPC (vHPC) cluster using Magic Castle, an open-source Terraform-based IaC framework, and compared performance against SuperMUC-NG Phase 1, a traditional bare-metal HPC system. Four representative biophysical simulation codes were benchmarked: Quantum ESPRESSO v7.4.1 for plane-wave Density-Functional Theory (DFT) calculations on 64-atom silicon systems; GROMACS v2025 for all-atom molecular dynamics (MD) of 11-million-atom ethanol-water mixtures; LAMMPS v22.07.2025 for classical MD with 32 million Argon atoms; and CP2K v2025.1 for DFT-based MD of 64 water molecules.

At single-node and low core counts, virtual HPC performance closely matches bare-metal systems for all four codes, indicating minimal baseline computational overhead. However, GROMACS and CP2K exhibited significant multi-node scaling degradation for communication-intensive workloads, with large efficiency drops compared to bare-metal systems. Detailed profiling revealed that inter-node network latency, not CPU capacity, drives virtualization overhead. Measured network bandwidth on the vHPC system was only a few Gbit/s with latency approximately 1 ms, far from nominal 100 Gbit/s Ethernet specifications and typical bare-metal latency of less than 1 microsecond. This bottleneck particularly affects MPI collective operations dominating GROMACS and CP2K scaling behavior, while CPU virtualization overhead remains negligible at less than 5%.

These results demonstrate that IaC-based virtual HPC delivers production-ready performance for scientific workloads with moderate communication requirements. The ability to provision fully-configured HPC environments in hours represents a significant operational improvement for workflows requiring rapid deployment, reproducibility, and resource elasticity. vHPC is immediately viable for burst computing, education and training, benchmarking, development workflows, and multi-site federation. Network optimization through software approaches or hardware solutions offers pathways to reduce the performance gap. The InHPC-DE project of GCS (Gauss Centre for Supercomputing) validates technical feasibility of large-scale vHPC adoption for building a federated infrastructure. Future work will extend evaluation to GPU-accelerated workloads, container-based isolation strategies, and cross-provider workload migration capabilities.

Contributors:

Traditional HPC (tHPC) infrastructure deployment requires weeks to months for hardware procurement, network configuration, and software integration, limiting agility for short-term projects and hampering reproducibility through non-standardized configurations. Infrastructure-as-Code (IaC) enables rapid, version-controlled cluster deployment, but systematic evaluation of production-grade open-source IaC frameworks for communication-intensive workloads remains limited. Prior virtualization research demonstrates 5-10% single-node overhead but significant multi-node scaling challenges due to network latency. This work benchmarks virtual HPC clusters deployed via Magic Castle within Germany's InHPC-DE project, evaluating open-source IaC solutions rather than proprietary offerings like AWS ParallelCluster or Azure CycleCloud.

We deployed a virtual HPC (vHPC) cluster using Magic Castle, an open-source Terraform-based IaC framework, and compared performance against SuperMUC-NG Phase 1, a traditional bare-metal HPC system. Four representative biophysical simulation codes were benchmarked: Quantum ESPRESSO v7.4.1 for plane-wave Density-Functional Theory (DFT) calculations on 64-atom silicon systems; GROMACS v2025 for all-atom molecular dynamics (MD) of 11-million-atom ethanol-water mixtures; LAMMPS v22.07.2025 for classical MD with 32 million Argon atoms; and CP2K v2025.1 for DFT-based MD of 64 water molecules.

At single-node and low core counts, virtual HPC performance closely matches bare-metal systems for all four codes, indicating minimal baseline computational overhead. However, GROMACS and CP2K exhibited significant multi-node scaling degradation for communication-intensive workloads, with large efficiency drops compared to bare-metal systems. Detailed profiling revealed that inter-node network latency, not CPU capacity, drives virtualization overhead. Measured network bandwidth on the vHPC system was only a few Gbit/s with latency approximately 1 ms, far from nominal 100 Gbit/s Ethernet specifications and typical bare-metal latency of less than 1 microsecond. This bottleneck particularly affects MPI collective operations dominating GROMACS and CP2K scaling behavior, while CPU virtualization overhead remains negligible at less than 5%.

These results demonstrate that IaC-based virtual HPC delivers production-ready performance for scientific workloads with moderate communication requirements. The ability to provision fully-configured HPC environments in hours represents a significant operational improvement for workflows requiring rapid deployment, reproducibility, and resource elasticity. vHPC is immediately viable for burst computing, education and training, benchmarking, development workflows, and multi-site federation. Network optimization through software approaches or hardware solutions offers pathways to reduce the performance gap. The InHPC-DE project of GCS (Gauss Centre for Supercomputing) validates technical feasibility of large-scale vHPC adoption for building a federated infrastructure. Future work will extend evaluation to GPU-accelerated workloads, container-based isolation strategies, and cross-provider workload migration capabilities.

Contributors:

Format

on-demandon-site