Portable GPU Kernel Acceleration for Biological Foundation Models & Algorithm Using OpenAI Triton

Wednesday, June 24, 2026 3:45 PM to 5:15 PM · 1 hr. 30 min. (Europe/Berlin)

Foyer D-G - 2nd Floor

Research Poster

Bioinformatics and Life Sciences · Industrial Use Cases of HPC, ML and QC · ML Model Optimization · Optimizing for Energy and Performance · Performance and Resource Modeling

Information

Poster is on display and will be presented at the poster pitch session.

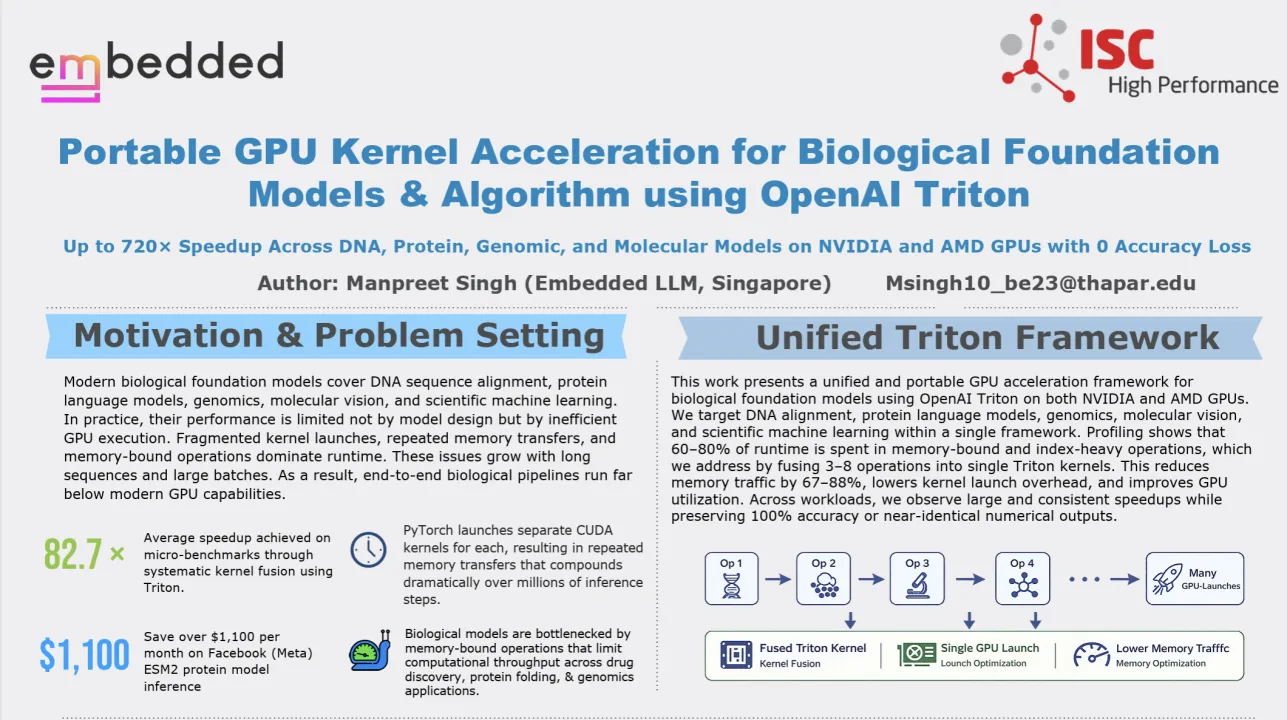

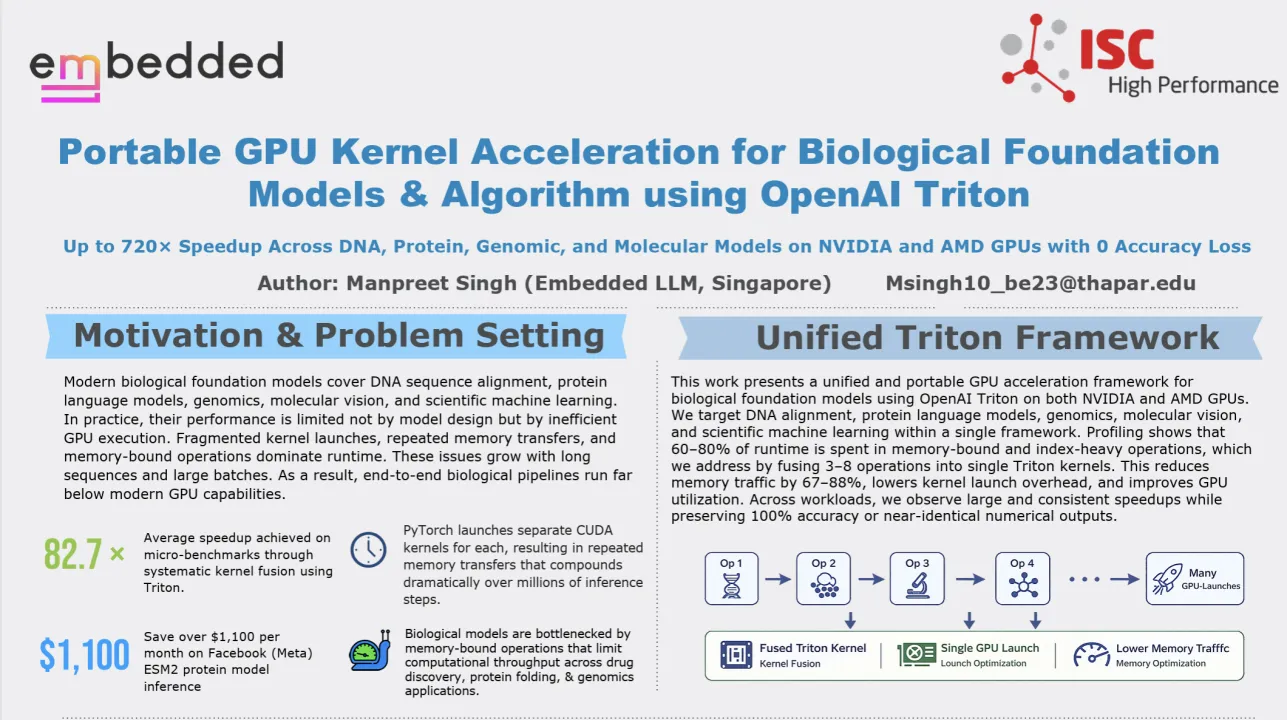

Modern biological foundation models and algorithms are central to applications in genomics, protein science, drug discovery, and molecular analysis. While model architectures have advanced rapidly, practical performance is increasingly constrained by inefficient GPU execution. Profiling across representative workloads shows that memory-bound operations, fragmented kernel launches, and repeated device memory transfers account for 60 to 80 percent of runtime. These inefficiencies worsen with long biological sequences and large batch sizes, leaving modern GPUs significantly underutilized.

This poster presents a unified and portable GPU acceleration framework for biological foundation models and algorithms using OpenAI Triton. Rather than optimizing individual models in isolation, we target shared computational patterns across biology workloads, including dynamic programming, attention mechanisms, index-heavy tensor operations, and normalization layers. Our approach systematically fuses 3 to 8 PyTorch operations into single Triton kernels, explicitly managing memory reuse and kernel launch structure. The same Triton kernels run unmodified on both NVIDIA and AMD GPUs, enabling performance portability without vendor-specific CUDA rewrites, while preserving numerical correctness.
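To see why fusing a chain of operations helps memory-bound workloads, a back-of-envelope traffic model can be sketched as follows. It is not taken from the poster: it assumes each operation is elementwise, reads one tensor and writes one tensor of the same size, and that intermediates do not stay in cache between kernels. The function names are hypothetical.

```python
def global_memory_passes(num_ops: int, fused: bool) -> int:
    """Count full-tensor reads plus writes for a chain of elementwise ops.

    Assumption (illustrative, not from the poster): every op reads one tensor
    and writes one tensor of the same size, with no cache reuse between ops.
    """
    if num_ops < 1:
        raise ValueError("num_ops must be at least 1")
    if fused:
        # A single fused kernel reads the input once and writes the result once;
        # intermediates live in registers.
        return 2
    # Unfused: each op is its own kernel with one read and one write.
    return 2 * num_ops


def traffic_reduction(num_ops: int) -> float:
    """Ideal ratio of unfused to fused global-memory traffic."""
    return global_memory_passes(num_ops, fused=False) / global_memory_passes(num_ops, fused=True)


# The abstract mentions fusing 3 to 8 operations per kernel.
for n in (3, 8):
    print(f"{n} ops fused: up to {traffic_reduction(n):.0f}x less memory traffic")
```

Under these assumptions the model gives only an upper bound on the traffic saved; real kernels with reductions, multiple inputs, or cache hits will deviate from it.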

We evaluate the framework across DNA sequence alignment, protein language models, large-context genomic transformers, and molecular vision tasks. A Triton reimplementation of the Needleman–Wunsch global DNA alignment algorithm achieves extreme acceleration, with an average speedup of 388× and a maximum speedup of 489× on AMD Instinct MI300X, and up to 720× speedup on NVIDIA GPUs. Peak throughput reaches 99,513 sequences per second with sustained compute of 1.7 GOPS, while producing identical alignment scores.
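The abstract does not describe how the Needleman–Wunsch kernel is parallelized. One common approach for dynamic programming on GPUs is anti-diagonal (wavefront) evaluation, in which all cells on the same anti-diagonal are independent and can be updated together. The NumPy sketch below illustrates that idea on the CPU and checks it against a plain nested-loop reference; the scoring parameters and function names are assumptions chosen for illustration, not the authors' settings.

```python
import numpy as np


def nw_score_reference(a: str, b: str, match=1, mismatch=-1, gap=-2) -> int:
    """Score-only Needleman-Wunsch with a linear gap penalty, cell by cell."""
    n, m = len(a), len(b)
    H = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        H[i][0] = i * gap
    for j in range(1, m + 1):
        H[0][j] = j * gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            sub = match if a[i - 1] == b[j - 1] else mismatch
            H[i][j] = max(H[i - 1][j - 1] + sub, H[i - 1][j] + gap, H[i][j - 1] + gap)
    return H[n][m]


def nw_score_wavefront(a: str, b: str, match=1, mismatch=-1, gap=-2) -> int:
    """Same recurrence, but each anti-diagonal is updated in one vector step."""
    x, y = np.array(list(a)), np.array(list(b))
    n, m = len(a), len(b)
    H = np.zeros((n + 1, m + 1), dtype=np.int64)
    H[:, 0] = gap * np.arange(n + 1)
    H[0, :] = gap * np.arange(m + 1)
    # Cells with i + j == d depend only on diagonals d - 1 and d - 2,
    # so every cell on diagonal d can be computed independently.
    for d in range(2, n + m + 1):
        i = np.arange(max(1, d - m), min(n, d - 1) + 1)
        j = d - i
        sub = np.where(x[i - 1] == y[j - 1], match, mismatch)
        H[i, j] = np.maximum(H[i - 1, j - 1] + sub,
                             np.maximum(H[i - 1, j], H[i, j - 1]) + gap)
    return int(H[n, m])


pairs = [("GATTACA", "GCATGCG"), ("ACGTACGT", "ACGTTCGT"), ("AAAA", "TT")]
for s, t in pairs:
    print(s, t, nw_score_wavefront(s, t), nw_score_reference(s, t))
```

On a GPU, the vectorized update of one diagonal would map to the threads of a kernel; whether the poster's implementation batches many sequence pairs per kernel or uses a different tiling is not stated.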

For protein language models, Triton-based kernel fusion delivers large and consistent gains. RostLab’s ProtBERT achieves an average speedup of 3.6× with 100 percent identical masked token predictions and a 72.2 percent reduction in GPU hours. Meta’s ESM2 protein model achieves an average speedup of 43.1×, with a 97.7 percent reduction in inference latency and up to 68.9 percent lower memory usage, translating to cloud cost savings exceeding $1,100 per GPU per month on AWS L4 instances.
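The speedup and percentage-reduction figures above are two views of the same measurement: a speedup of s leaves 1/s of the original time, so the reduction is 1 − 1/s. The short check below, with a hypothetical helper name, reproduces the reported 72.2 percent and 97.7 percent from the reported speedups, assuming both were derived from the same timings.

```python
def reduction_from_speedup(speedup: float) -> float:
    """Fraction of time saved when a workload runs `speedup` times faster."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup


# Average speedups quoted in the abstract.
for name, s in (("ProtBERT", 3.6), ("ESM2", 43.1)):
    print(f"{name}: {s}x speedup -> {100 * reduction_from_speedup(s):.1f}% less time")
```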

We further apply the framework to large genomic transformers and molecular vision models. For Enformer at a 196K sequence length, Triton delivers up to 1.82× speedup with cosine similarity above 0.99999. For AlphaGenome, we observe a 5.05× speedup with near-perfect numerical agreement. Triton-optimized MolScribe inference achieves up to 1.57× faster execution with 100 percent identical SMILES outputs.
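Cosine similarity between a reference output and an optimized output is a simple way to quantify numerical agreement of the kind reported for Enformer. A minimal sketch of such a check is shown below; the random arrays only stand in for model outputs, and the noise level is an arbitrary assumption, not a measurement from the poster.

```python
import numpy as np


def cosine_similarity(a, b) -> float:
    """Cosine of the angle between two arrays, flattened to vectors."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


# Stand-in data: a "reference" output and a copy with small simulated rounding noise.
rng = np.random.default_rng(0)
reference = rng.standard_normal(4096)
optimized = reference + 1e-4 * rng.standard_normal(4096)

similarity = cosine_similarity(reference, optimized)
print("passes 0.99999 threshold:", similarity > 0.99999)
```

Cosine similarity is insensitive to a uniform scaling of the output, so a check like this is usually paired with an absolute or relative error bound.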

Overall, this work demonstrates that systematic GPU kernel fusion and memory-aware execution provide a powerful and portable path to scaling biological foundation models, delivering speedups ranging from multi-fold improvements to over 700× acceleration without altering model architectures or scientific outputs.

Format

on-demand · on-site