Making Gravity SWIFTer: GPU Offloads for Gravity in the SWIFT Cosmology Code

Wednesday, June 24, 2026 3:45 PM to 5:15 PM · 1 hr. 30 min. (Europe/Berlin)

Foyer D-G - 2nd Floor

Research Poster

Physics

Information

Poster is on display and will be presented at the poster pitch session.

Modern high-performance computing (HPC) systems are increasingly heterogeneous, combining large CPU clusters with accelerators such as GPUs to improve performance and power efficiency. However, many prominent astronomy codes remain designed for CPU-only architectures and therefore cannot fully exploit these systems. SWIFT (SPH With Inter-dependent Fine-grained Tasking) is one such example, and enabling GPU acceleration within SWIFT is critical for sustaining its scientific productivity on current and future heterogeneous HPC platforms.

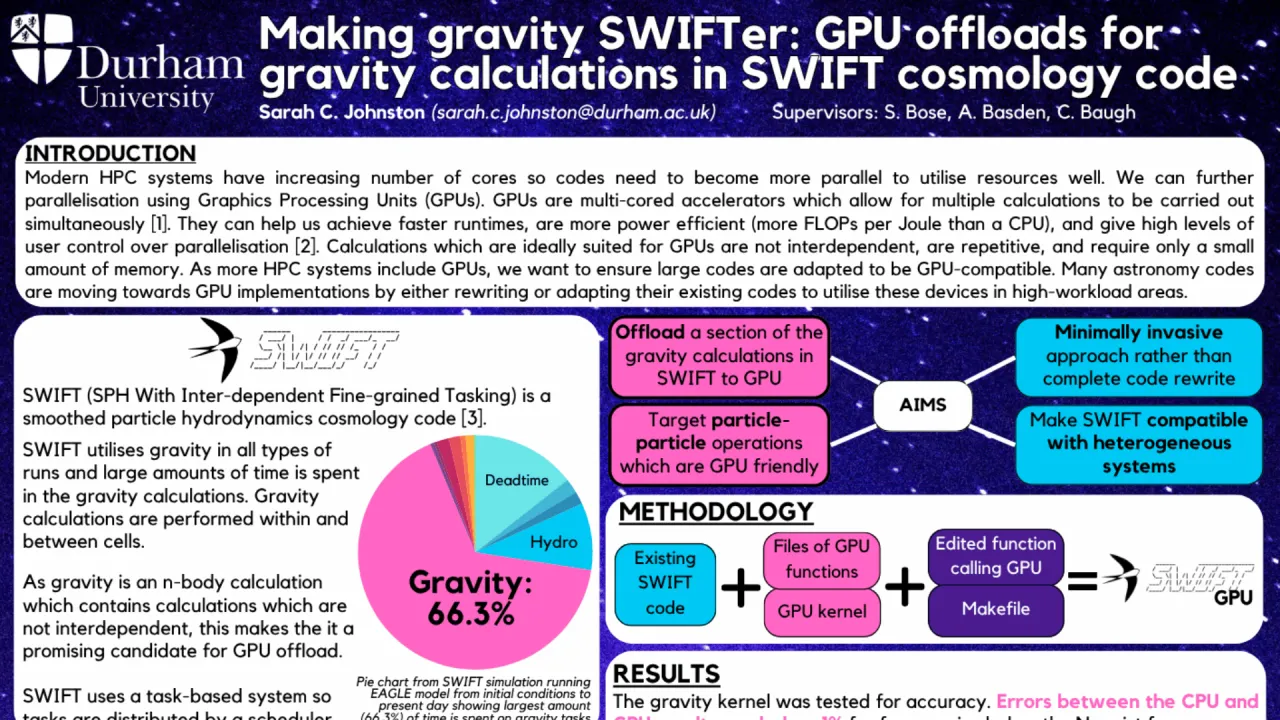

SWIFT is a versatile open-source cosmology code used to model a wide range of astrophysical scenarios, from large-scale structure formation to planetary formation. It employs task-based parallelism, decomposing the workload into fine-grained independent tasks managed by a scheduler for efficient CPU utilisation, and is highly optimised for memory-intensive, CPU-only clusters. A significant fraction of SWIFT’s runtime is spent on gravitational calculations. In gravity N-body simulations, particles interact through gravitational forces in a highly repetitive and largely independent manner, making these calculations well suited to GPU acceleration. Within SWIFT, gravity is decomposed into fine-grained ‘self’ and ‘pair’ tasks acting on particles within a cell and between neighbouring cells, respectively, resulting in many independent gravity tasks per timestep.

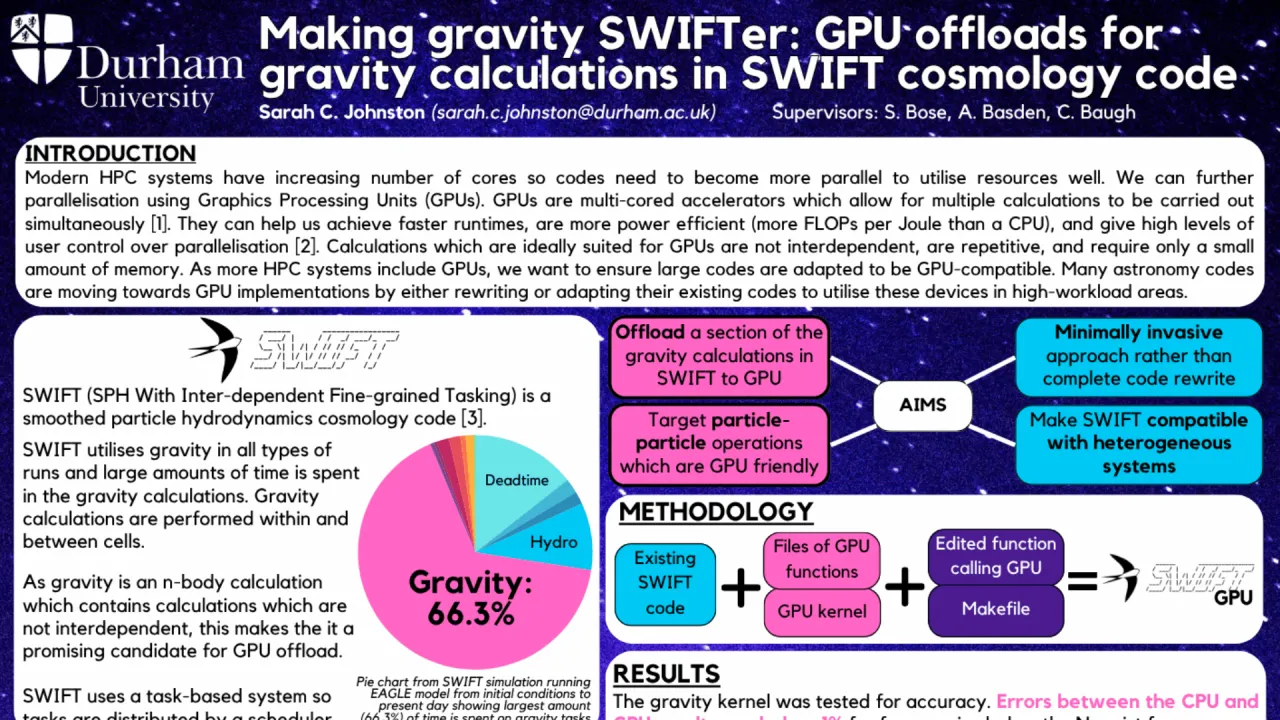

In this work, we address the challenge of enabling GPU acceleration in SWIFT while preserving its existing task-based design. Rather than performing a complete code redesign, we replace the CPU-based gravity calculations with newly developed CUDA kernels, creating a hybrid C/CUDA implementation that offloads gravity to the GPU while leaving the remainder of the code largely unchanged. This minimises disruption to existing SWIFT functionality, allows continued use of the scheduler, and enables the CPU to execute non-gravity tasks concurrently. The GPU kernels achieve high numerical accuracy, with deviations of less than 1% compared to the CPU implementation, confined to scales below the Nyquist frequency where numerical resolution effects dominate. Using these kernels, we reproduce key physical outcomes, including particle distributions and dark matter halo formation.

A major limitation of this approach is the overhead associated with CPU–GPU memory transfers. To mitigate this, we introduce a novel, scheduler-compatible task-bundling mechanism that groups multiple gravity tasks into larger batches. These bundled tasks are packed into contiguous arrays and processed in a single kernel invocation, increasing GPU occupancy and amortising data-transfer costs by replacing many small kernel launches with fewer, larger ones.

To further exploit GPU parallelism, we explore a redistribution of gravity calculations. While the CPU version of SWIFT uses particle–multipole approximations to reduce computational cost, particle–particle interactions are more accurate and map naturally to GPU architectures. We therefore investigate replacing selected particle–multipole interactions with particle–particle calculations, improving physical accuracy while increasing GPU utilisation.

Although the current implementation does not yet deliver an end-to-end performance improvement due to data-movement overheads, it demonstrates the viability of GPU offloading for gravity in SWIFT and identifies key architectural bottlenecks. The focus of this work is on correctness, integration, and architectural feasibility, providing a proof of concept and a foundation for future performance and energy-efficiency optimisation on heterogeneous HPC systems.

Modern high-performance computing (HPC) systems are increasingly heterogeneous, combining large CPU clusters with accelerators such as GPUs to improve performance and power efficiency. However, many prominent astronomy codes remain designed for CPU-only architectures and therefore cannot fully exploit these systems. SWIFT (SPH With Inter-dependent Fine-grained Tasking) is one such example, and enabling GPU acceleration within SWIFT is critical for sustaining its scientific productivity on current and future heterogeneous HPC platforms.

SWIFT is a versatile open-source cosmology code used to model a wide range of astrophysical scenarios, from large-scale structure formation to planetary formation. It employs task-based parallelism, decomposing the workload into fine-grained independent tasks managed by a scheduler for efficient CPU utilisation, and is highly optimised for memory-intensive, CPU-only clusters. A significant fraction of SWIFT’s runtime is spent on gravitational calculations. In gravity N-body simulations, particles interact through gravitational forces in a highly repetitive and largely independent manner, making these calculations well suited to GPU acceleration. Within SWIFT, gravity is decomposed into fine-grained ‘self’ and ‘pair’ tasks acting on particles within a cell and between neighbouring cells, respectively, resulting in many independent gravity tasks per timestep.

In this work, we address the challenge of enabling GPU acceleration in SWIFT while preserving its existing task-based design. Rather than performing a complete code redesign, we replace the CPU-based gravity calculations with newly developed CUDA kernels, creating a hybrid C/CUDA implementation that offloads gravity to the GPU while leaving the remainder of the code largely unchanged. This minimises disruption to existing SWIFT functionality, allows continued use of the scheduler, and enables the CPU to execute non-gravity tasks concurrently. The GPU kernels achieve high numerical accuracy, with deviations of less than 1% compared to the CPU implementation, confined to scales below the Nyquist frequency where numerical resolution effects dominate. Using these kernels, we reproduce key physical outcomes, including particle distributions and dark matter halo formation.

A major limitation of this approach is the overhead associated with CPU–GPU memory transfers. To mitigate this, we introduce a novel, scheduler-compatible task-bundling mechanism that groups multiple gravity tasks into larger batches. These bundled tasks are packed into contiguous arrays and processed in a single kernel invocation, increasing GPU occupancy and amortising data-transfer costs by replacing many small kernel launches with fewer, larger ones.

To further exploit GPU parallelism, we explore a redistribution of gravity calculations. While the CPU version of SWIFT uses particle–multipole approximations to reduce computational cost, particle–particle interactions are more accurate and map naturally to GPU architectures. We therefore investigate replacing selected particle–multipole interactions with particle–particle calculations, improving physical accuracy while increasing GPU utilisation.

Although the current implementation does not yet deliver an end-to-end performance improvement due to data-movement overheads, it demonstrates the viability of GPU offloading for gravity in SWIFT and identifies key architectural bottlenecks. The focus of this work is on correctness, integration, and architectural feasibility, providing a proof of concept and a foundation for future performance and energy-efficiency optimisation on heterogeneous HPC systems.

Format

on-demandon-site