Accelerating Large-Scale Federated Graph Foundation Model Pre-Training for Secure Sensing via Hierarchical Parallelism

Wednesday, June 24, 2026 3:45 PM to 5:15 PM · 1 hr. 30 min. (Europe/Berlin)

Foyer D-G - 2nd Floor

Research Poster

AI Applications powered by HPC Technologies · Application Workflows for Discovery · Digital Twins and ML · HPC Simulations enhanced by Machine Learning · ML Systems and Frameworks

Information

The poster is on display and will be presented at the poster pitch session.

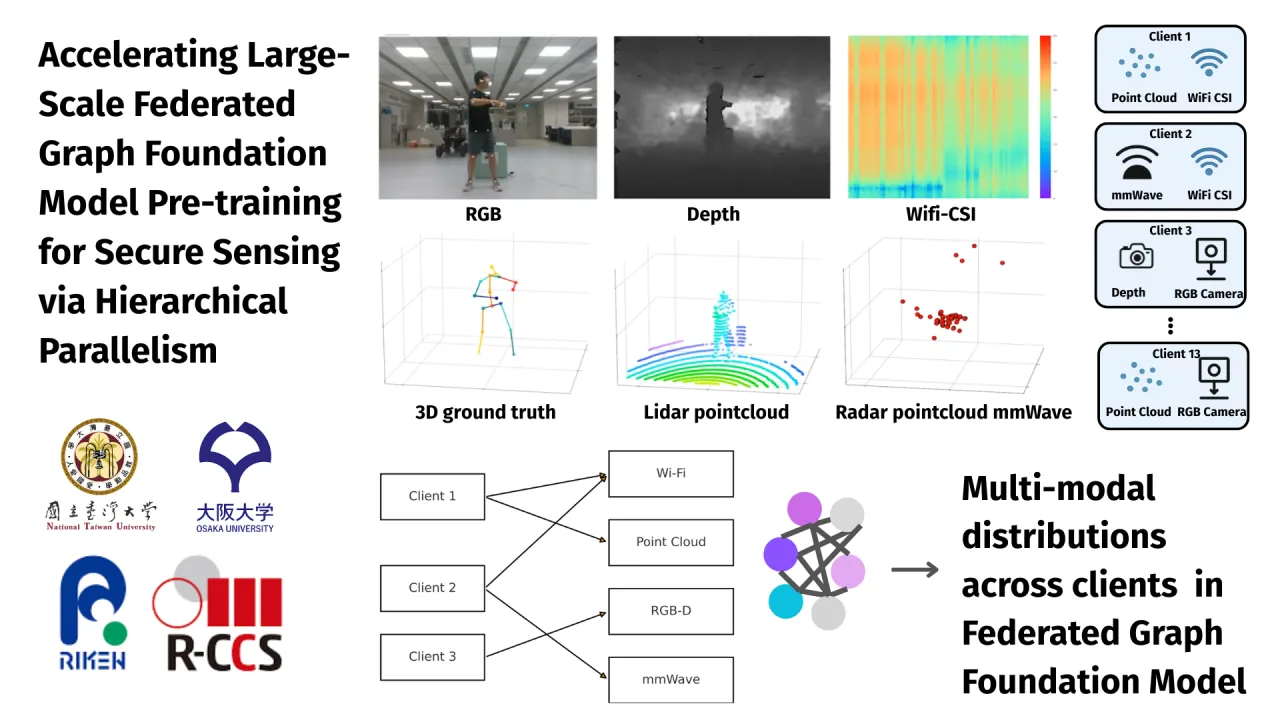

Developing generalized Foundation Models for smart city sensing requires processing exabytes of data from disparate sources such as Wi-Fi, LiDAR, and Radar. Two problems stand in the way: (a) privacy constraints prevent centralizing this data, and (b) the sheer model size makes standard Federated Learning (FL) inefficient. We introduce HPC-FedGraph, a scalable framework that bridges the gap between distributed edge data and centralized supercomputing power by reimagining the FL pipeline for High-Performance Computing (HPC) environments. We propose a split-computing design in which edge devices act as Graph Encoders, converting raw sensor data into privacy-preserving, encrypted graph patches. These lightweight embeddings are streamed to a central supercomputer (validated on Fugaku), which carries out the compute-intensive work. Unlike traditional FL, which idles while waiting for devices, our framework combines Hierarchical Parallelism (MPI+OpenMP) with an asynchronous buffering strategy to maximize compute-node utilization. This allows us to train massive Graph Transformer models with Pipeline Parallelism across thousands of nodes without direct access to the raw sensor data. Our evaluation shows that HPC-FedGraph achieves near-linear scalability and a 15x improvement in training throughput over traditional federated baselines. By leveraging HPC infrastructure for aggregation and optimization, we enable high-fidelity, privacy-preserving Digital Twin models that were previously computationally infeasible.
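
The asynchronous buffering idea can be illustrated with a small, self-contained sketch. This is not HPC-FedGraph's implementation; it only assumes the general pattern the abstract describes: edge devices stream fixed-size embeddings (never raw sensor data) into a buffer, and the central trainer forms a batch as soon as enough embeddings have arrived from any mix of devices, instead of waiting for every device to finish a round. All names (`GraphPatch`, `edge_encoder`, `server_loop`, `run`), the buffer size, and the batch size are hypothetical. Python threads stand in for MPI ranks and OpenMP threads, the "encoder" emits random vectors instead of running a graph network, and no encryption is modeled.

```python
import queue
import random
import threading
import time
from dataclasses import dataclass
from typing import List


@dataclass
class GraphPatch:
    """Hypothetical message from an edge device: an embedding, never raw sensor data."""
    device_id: int
    embedding: List[float]


def edge_encoder(device_id: int, num_patches: int, buf: queue.Queue,
                 delay_s: float, dim: int = 4) -> None:
    """Stand-in for an on-device Graph Encoder that streams fixed-size embeddings."""
    rng = random.Random(device_id)
    for _ in range(num_patches):
        time.sleep(delay_s)  # simulate heterogeneous device speeds
        # Placeholder encoding; a real encoder would run a graph model over local sensor data.
        embedding = [rng.random() for _ in range(dim)]
        buf.put(GraphPatch(device_id, embedding))  # blocks if the buffer is full


def server_loop(buf: queue.Queue, batch_size: int, total_patches: int) -> List[List[int]]:
    """Form each batch as soon as enough patches are buffered, from whichever devices sent them.

    Returns the device ids contained in each batch; a real trainer would run one
    training step per batch here.
    """
    batches: List[List[int]] = []
    consumed = 0
    while consumed < total_patches:
        want = min(batch_size, total_patches - consumed)
        batch = [buf.get() for _ in range(want)]  # waits only until `want` patches exist
        consumed += want
        batches.append([p.device_id for p in batch])
    return batches


def run(num_devices: int = 3, patches_per_device: int = 5,
        batch_size: int = 4) -> List[List[int]]:
    # Bounded buffer: fast devices block (backpressure) instead of growing memory without limit.
    buf: queue.Queue = queue.Queue(maxsize=8)
    workers = [
        threading.Thread(target=edge_encoder,
                         args=(d, patches_per_device, buf, 0.001 * (d + 1)))
        for d in range(num_devices)
    ]
    for w in workers:
        w.start()
    batches = server_loop(buf, batch_size, num_devices * patches_per_device)
    for w in workers:
        w.join()
    return batches


if __name__ == "__main__":
    for step, ids in enumerate(run()):
        print(f"step {step}: devices {ids}")
```

With 3 devices producing 5 patches each and a batch size of 4, the trainer runs four steps of sizes 4, 4, 4, and 3; which devices land in each batch depends on arrival order, which is the point: no step waits for the slowest device. In an actual MPI deployment, the same role would more likely be played by non-blocking receives (for example `MPI_Irecv`) feeding a per-node buffer, which this sketch does not attempt to reproduce.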

Contributors:

Format

on-demand · on-site